Cite-or-decline

CORE's AI can answer only when it can ground the claim in a file, regulation, or controlled system record. If it cannot cite, it declines.

§ AI for credit · Built for examination

CORE supports credit analysis, memo generation, and underwriting review with AI that is replayable by default. Every generated claim must cite a source, every generation is frozen against the loan file, and the analyst keeps the decision.

Cite-or-decline · Examiner-replayable · Model-agnostic by architecture

DSCR trend supported by audited financials and policy threshold review.

§ AI · Built for examination, not vapor

CORE treats generation as a controlled credit workflow: source-bound claims, frozen snapshots, analyst-owned decisions, and replayable model records.

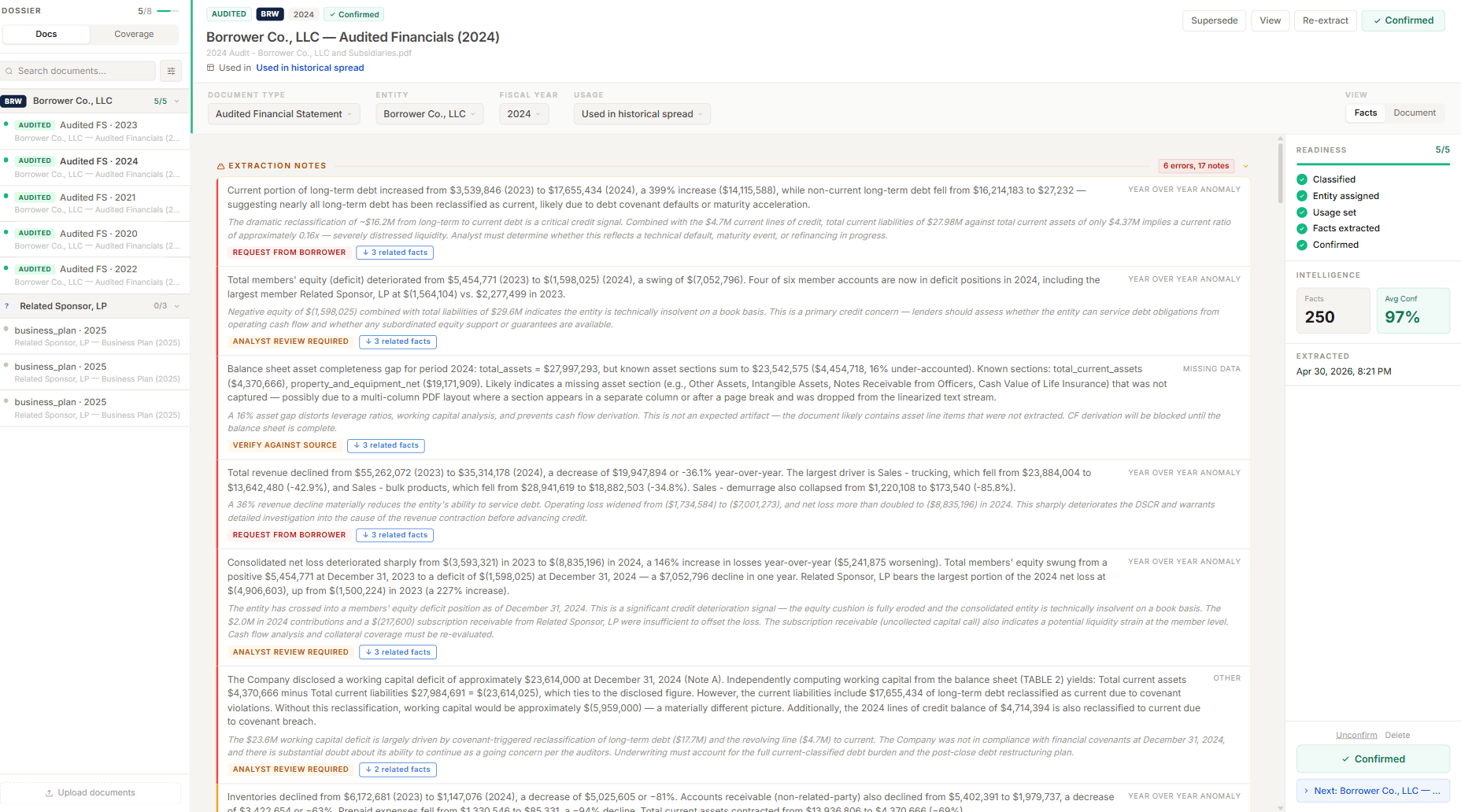

Every fact, figure, and paragraph resolves to a source span. CORE compares facts across documents and years, then flags YoY anomalies, balance-sheet gaps, and debt reclassifications before the spread is opened.

The model drafts the signal. The analyst accepts, edits, or rejects it. CORE keeps the human decision separate from the generated recommendation.

Model, version, inputs, output, and analyst overrides are frozen against the loan file, so the memo can be replayed after the decision is made.

Section drafting routes through deterministic head-selection across CORE's credit, policy, program, and operations divisions, with agent-level actor and timestamp logging.

No model is pinned in code. Every generation resolves through a platform-controlled registry, so upgrading the model fleet does not retroactively alter prior outputs.
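A minimal sketch of the registry idea, under stated assumptions: `ModelRegistry`, `ModelPin`, `GenerationRecord`, the `memo_section` role, and the `family-a` names are all hypothetical and are not CORE's actual API. It only illustrates how copying the resolved pin into each record keeps earlier outputs unchanged when the registry is later upgraded.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class ModelPin:
    family: str   # placeholder family name, not a real CORE model
    version: str


class ModelRegistry:
    """Hypothetical registry mapping a routing role to its current pin."""

    def __init__(self) -> None:
        self._pins: Dict[str, ModelPin] = {}

    def set_pin(self, role: str, pin: ModelPin) -> None:
        self._pins[role] = pin

    def resolve(self, role: str) -> ModelPin:
        return self._pins[role]


@dataclass(frozen=True)
class GenerationRecord:
    role: str
    model: ModelPin  # copied at generation time, never re-resolved later
    output: str


def generate(registry: ModelRegistry, role: str,
             draft: Callable[[ModelPin], str]) -> GenerationRecord:
    pin = registry.resolve(role)
    return GenerationRecord(role=role, model=pin, output=draft(pin))


def fake_draft(pin: ModelPin) -> str:
    # Stand-in for a real model call (assumption for illustration only).
    return f"drafted by {pin.family}@{pin.version}"


registry = ModelRegistry()
registry.set_pin("memo_section", ModelPin("family-a", "1.0"))
before_upgrade = generate(registry, "memo_section", fake_draft)

# Upgrading the pin affects only generations made after this point.
registry.set_pin("memo_section", ModelPin("family-a", "2.0"))
after_upgrade = generate(registry, "memo_section", fake_draft)

print(before_upgrade.model.version)  # 1.0
print(after_upgrade.model.version)   # 2.0
```

Because the record is a frozen dataclass holding its own copy of the pin, the earlier record cannot be mutated by a later registry change.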

Current portion of long-term debt rose from $3,539,846 (2023) to $17,655,434 (2024), a 399% increase. Non-current LTD fell from $16.2M to $27,232, suggesting near-total reclassification to current. Likely cause: covenant default or maturity acceleration. The current ratio collapses to 0.16x ($4.37M current assets against $27.98M current liabilities).

The dramatic reclassification of ~$16.2M from long-term to current debt is a critical credit signal. Combined with $4.7M current lines of credit, total current liabilities of $27.98M against $4.37M current assets implies severe distress. Analyst must determine whether this reflects a technical default, maturity event, or refinancing in progress.
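The two headline figures in the signal above can be checked with simple arithmetic. The sketch below uses only the numbers quoted in the text; the function names are illustrative, and the "liquidity ratio" is assumed to mean current assets divided by current liabilities.

```python
def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100


def current_ratio(current_assets: float, current_liabilities: float) -> float:
    """Current assets divided by current liabilities."""
    return current_assets / current_liabilities


# Figures as quoted in the generated signal.
cpltd_2023 = 3_539_846
cpltd_2024 = 17_655_434
increase = pct_change(cpltd_2023, cpltd_2024)

# Rounded figures from the text: $4.37M assets, $27.98M liabilities.
ratio = current_ratio(4_370_000, 27_980_000)

print(f"{increase:.0f}%")  # 399%
print(f"{ratio:.2f}x")     # 0.16x
```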

Generated automatically at intake. The model wrote the credit signal; the analyst reviews the citation. Every figure resolves to a span on the underlying audited PDF.

Every route, support package, proposal, and analyst decision is preserved as part of the credit record.

§ AI · Grounded, not guessed

CORE does not cite a loose PDF library. Regulations, Federal Register rules, and agency SOPs are chunked, domain-tagged, timestamped, and versioned, so a memo citation resolves to the rule text that was current when the claim was generated.
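One way to picture a versioned corpus is an "as of" lookup: given the time a claim was generated, return the rule text that was in effect then. The sketch below is an assumption about how such a lookup could work, not CORE's implementation; `RuleVersion`, `VersionedRule`, the dates, and the placeholder rule text are all invented for illustration.

```python
from bisect import bisect_right
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass(frozen=True)
class RuleVersion:
    effective: date  # date this version of the rule took effect
    text: str        # placeholder text, not real regulatory language


class VersionedRule:
    """Hypothetical container for every version of a single rule chunk."""

    def __init__(self, versions: List[RuleVersion]) -> None:
        self._versions = sorted(versions, key=lambda v: v.effective)
        self._dates = [v.effective for v in self._versions]

    def as_of(self, when: date) -> Optional[RuleVersion]:
        # Find the latest version whose effective date is on or before `when`.
        idx = bisect_right(self._dates, when)
        return self._versions[idx - 1] if idx else None


rule = VersionedRule([
    RuleVersion(date(2022, 1, 1), "placeholder rule text, version 1"),
    RuleVersion(date(2024, 3, 1), "placeholder rule text, version 2"),
])

claim_generated_on = date(2023, 6, 15)
cited = rule.as_of(claim_generated_on)
print(cited.text)  # placeholder rule text, version 1
```

A claim generated in mid-2023 keeps resolving to version 1 even after version 2 is added, which is the property the paragraph above describes.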

Three program corpora live today: USDA OneRD, SBA 504, SBA 7(a). HUD, EB-5, and C-PACE corpora are scheduled next.

§ Search-driven · Examiner FAQ

Answered honestly, with the same vocabulary an examiner would use. No marketing scaffolding.

CORE uses a cite-or-decline policy. Every AI-generated paragraph, figure, or classification must cite the source document and span it derives from. If the model can't ground a statement in a file under the analyst's control, it declines rather than asserts.

Citations are surfaced inline. Reviewing the citation — not the prose — is the analyst workflow. The model writes; the analyst checks the source span.

Generation is bounded to the deal file. The model has no access to the open web at draft time, and no synthetic facts can be introduced from outside the cited corpus.
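The policy described above can be sketched as a gate that every claim passes through before it reaches a memo. This is a simplified illustration under assumptions: the `Citation` and `Claim` types, the document IDs, and the decline message are hypothetical, and a real check would also verify that the cited span actually supports the text.

```python
from dataclasses import dataclass
from typing import Optional, Set


@dataclass(frozen=True)
class Citation:
    document_id: str
    page: int
    line_start: int
    line_end: int


@dataclass(frozen=True)
class Claim:
    text: str
    citation: Optional[Citation]  # None means the model could not ground it


DECLINED = "Declined: no source span in the deal file supports this statement."


def cite_or_decline(claim: Claim, deal_file_ids: Set[str]) -> str:
    """Emit the claim with its citation, or decline if it is ungrounded."""
    c = claim.citation
    # Decline when there is no citation or it points outside the deal file.
    if c is None or c.document_id not in deal_file_ids:
        return DECLINED
    return f"{claim.text} [{c.document_id} p.{c.page}, lines {c.line_start}-{c.line_end}]"


deal_file = {"audited-fs-2024"}  # hypothetical document ID

grounded = Claim("Current ratio is 0.16x.", Citation("audited-fs-2024", 4, 10, 12))
ungrounded = Claim("Management expects a refinancing.", None)
outside = Claim("Industry margins are falling.", Citation("web-article", 1, 1, 2))

print(cite_or_decline(grounded, deal_file))
print(cite_or_decline(ungrounded, deal_file))
print(cite_or_decline(outside, deal_file))
```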

Every generation creates a frozen snapshot — model identifier, version, seed, inputs (the cited file spans), output, and any analyst overrides. The snapshot is hashed and stored against the loan's audit trail.
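A minimal sketch of snapshot hashing, assuming SHA-256 over a canonical JSON encoding. The source does not say which hash or serialization CORE uses, and the field names and values below are illustrative only.

```python
import hashlib
import json


def snapshot_digest(snapshot: dict) -> str:
    """Hash a snapshot deterministically, independent of key order."""
    canonical = json.dumps(snapshot, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Hypothetical snapshot; field names mirror the list in the text above.
snapshot = {
    "model_id": "family-a",
    "model_version": "1.0",
    "seed": 7,
    "inputs": [{"document_id": "audited-fs-2024", "page": 4, "lines": [10, 12]}],
    "output": "Current ratio is 0.16x.",
    "analyst_overrides": [],
}

digest = snapshot_digest(snapshot)

# Any later change to the stored record produces a different digest.
tampered = dict(snapshot, output="Current ratio is 1.6x.")
print(digest == snapshot_digest(snapshot))   # True
print(digest == snapshot_digest(tampered))   # False
```

Sorting keys before hashing matters here: two records with the same content but different field order would otherwise hash differently.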

Examiner replay opens the generation snapshot in read-only context. The examiner sees what the model saw, what it produced, and what the analyst changed. Re-running the generation against the same inputs produces a comparable trace, line by line.
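A line-by-line comparison between a stored output and a replayed one could look like the sketch below. It uses Python's standard `difflib` and is only an illustration of the comparison step; the `replay_diff` name, the labels, and the sample text are assumptions, not CORE's replay surface.

```python
import difflib
from typing import List


def replay_diff(original: str, replayed: str) -> List[str]:
    """Return unified-diff lines; an empty list means the outputs match."""
    return list(difflib.unified_diff(
        original.splitlines(),
        replayed.splitlines(),
        fromfile="snapshot",
        tofile="replay",
        lineterm="",
    ))


stored = "CPLTD rose 399%.\nCurrent ratio is 0.16x."
same = replay_diff(stored, stored)
changed = replay_diff(stored, "CPLTD rose 399%.\nCurrent ratio is 0.17x.")

print(same)  # []
for line in changed:
    print(line)
```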

The replay surface is part of the platform — not a one-off export. It exists for every memo, every spread row, every classification decision.

Yes — at the span level, not the document level. A paragraph in a credit memo cites the page and line range that supports it. A spread cell cites the figure on the financial statement that backed it. A document classification cites the analyst (or model) decision that placed it in a checklist slot.

All citations are clickable. Hovering or opening a citation surfaces the source document and highlights the span.

CORE is model-agnostic by architecture. Today, the platform routes generations to two model families, each pinned in the registry to a stable version identifier and chosen against measured cite-faithfulness on credit-memo workloads.

Every generation snapshot records the model identifier and version it was produced under. Upgrading the model fleet does not retroactively alter prior outputs — the original snapshot is examiner-replayable on the original model.

Model selection, version pins, and routing rules are documented in the operator handbook and disclosed in implementation.

CORE routes AI like a credit department: section intent, source evidence, credit policy, regulatory context, analyst pair, and supervisor review. The model proposes; the analyst accepts, edits, or rejects; and every decision creates an audit entry.

Where automation-first AI leads with task execution, CORE's posture is examination-first. The platform was built inside an active program-credit operator and tested against examination findings, not against a feature backlog. This is a different center of gravity.

Both platforms have a place. The question for a regulated lender is which posture survives an examination question with no time to prepare.

Yes. CORE ships an AI Architecture Disclosure that covers: model fleet and version pins, prompt families, cite-grounding rules, decline conditions, retraining policy, snapshot schema, and the replay surface contract.

The disclosure is provided to examiners on request and to capital partners during diligence. It is not marketing. It is the document an examiner needs to evaluate the surface they are looking at.

§ Request demo

Demos are 30 minutes. We walk through real deal artifacts, source citations, and the audit trail behind a credit decision. Bring your hardest examination question.

Source-cited AI · Examiner-defensible by design · Live in 60 days